Websites That Allow Web Scraping: Top Picks 2026 - The GTM with Clay Blog

Claygent Builder: The easiest way to build, test, and deploy GTM Agents

Build production-ready Claygents in natural language with Sculptor. Test on real data for free, track versions, and deploy once across every workflow. All inside Clay.

How Clay Uses Clay Ads: From $250 to $25 CPL

See how Clay uses its own Ads feature to cut LinkedIn CPL from $250 to $25 and unlock Meta with enriched CRM audiences. No manual uploads needed.

HG Insights Corporate Hierarchy: GTM Precision in Clay

Use HG Insights corporate hierarchy data in Clay to clean CRMs, map parent-child accounts, and trigger expansion plays. See how it works.

Sales GTM Engineering: How Clay Built the Role From Scratch

Learn what sales GTM engineering is, how it collapses SDR, AE, and SE roles into one, and how Clay built and hires for this high-leverage function. See how it works.

How to Automate Inbound Lead Outreach: The Clay Playbook

Learn how to automate inbound lead outreach with enrichment, scoring, and personalized sequences. See the exact Clay workflow that runs without manual work.

demandDrive Joins Clay’s Partner Ecosystem as an Official Clay Studio Partner

demandDrive joins Clay’s partner ecosystem to help B2B teams turn account intelligence into pipeline and revenue with GTM engineering and automation.

B2B Sales Prospecting: 15 Strategies to Drive More Conversions

Master B2B sales prospecting with 15 proven strategies covering ICP building, multi-channel outreach, and list hygiene. Build a pipeline that converts.

AI Sales Assistants: 11 Ways to Accelerate Your Outbound

Discover 11 ways AI sales assistants automate lead research, enrichment, and email personalization. See how top B2B teams use them to accelerate outbound.

The Three Laws of GTM: How to Win in the AI Era

The three laws of GTM explain why uniqueness, saturation, and iteration speed determine who wins. Learn how AI changes the rules and what to do about it.

Best Work Email Finders by Segment: SMB vs. Enterprise

We tested 12 email finders across 4,700+ contacts to find the best work emails by segment. See accuracy, cost, and coverage winners for SMB and enterprise.

How Clay Converts Trial Users Into Customers With Automated Outreach

See how Clay uses automated outreach to convert trial users into customers, with enrichment, lead scoring, and personalized HubSpot campaigns. Learn how.

Best Mobile Phone Data Providers for B2B Sales Teams

We tested 9,806 numbers across 10 B2B mobile phone data providers. See which wins on accuracy, coverage, and cost for NAMER, EMEA, and APAC.

How to Build a Complete AI Outbound Sales Funnel

Learn how to build a complete AI outbound sales funnel—from account scoring to personalized outreach—using Clay waterfalls and automation. See how it works.

How to Get More Customers Using Outbound Sales: A Complete Guide

Learn how outbound sales works, who it's right for, and how to build a strategy from prospecting to closing. Covers cold calling, email, and more.

How to Automate 6 Cold Email Campaigns in One Clay Workflow

Learn how to automate 6 cold email campaigns from a single Clay table — with enrichment, AI classification, and deduplication built in. See how it works.

How Clay Identifies Tier 1 Accounts: A Three-Score System

See how Clay identifies tier 1 accounts using a three-score system: fit, engagement, and contract value. Learn how sales and marketing align on the same priorities.

Lead Scoring in Clay: A Step-by-Step Formula Guide

Learn how to build lead scoring formulas in Clay to prioritize your ICP leads by employee count, job postings, and more. See how it works.

How to Validate Cold Outbound Offers and Find Message-Market Fit

Learn how to validate cold outbound offers by finding message-market fit — from breaking down your value prop to testing with a phased email approach. See how it works.

Troubleshooting Outbound Sales and Prospecting: A Comprehensive Guide

Fix broken outbound sales campaigns with this guide. Diagnose open and reply rates, reduce no-shows, qualify prospects with MEDDIC, and optimize what's working.

Bulk Enrichment: Enrich Millions of CRM Records in Clay

Bulk enrichment lets Enterprise teams enrich millions of Salesforce records with firmographics, tech stack, and AI research — then write results back automatically.

Clay Templates: Automate, Customize, and Replicate Any GTM Workflow

Clay Templates let you replicate full GTM workflows in hours, not days. Automate prospecting from data scraping to AI messaging, free and fully customizable.

How to Optimize Your Credit Usage in Clay

Learn how to optimize your credit usage in Clay with conditional formulas, Clearbit waterfall lookups, and smarter enrichment workflows. Save credits fast.

AI for sales prospecting

Learn about how to use AI for sales prospecting in this comprehensive guide, including framework for creating AI prompts and examples of cold email templates using AI that real sales teams have used successfully to land clients. AI sales prospecting can save your team thousands of hours—and double or triple your positive response rates.

The Reverse Demo: How Clay Replaced Traditional B2B Sales Demos

A reverse demo lets prospects solve real problems live, guided by your rep. Learn how Clay used 100+ sessions to boost conversion, retention, and product quality.

Data Waterfalls: How to Maximize Contact Coverage with Clay

Data waterfalls query multiple providers in sequence so you only pay for matches. See how Clay pushes coverage from 30% to 80%+ without annual contracts.

How Clay Runs ABM Campaigns: A Step-by-Step Playbook

See how Clay runs ABM campaigns — scoring 300 accounts, personalizing mailers and landing pages, and automating SDR follow-up. Learn how.

How We Built Clay's GTM Engineering Function

See how Clay built its GTM engineering function with sprint-based delivery, founder-level reporting, and full sales automation. A practical inside look.

Best Personal Email Finder Tools: Tested and Ranked

We tested 5 personal email finder tools across 2,354 prospects. See accuracy, coverage, and pricing data — plus the waterfall order that hit 79% coverage.

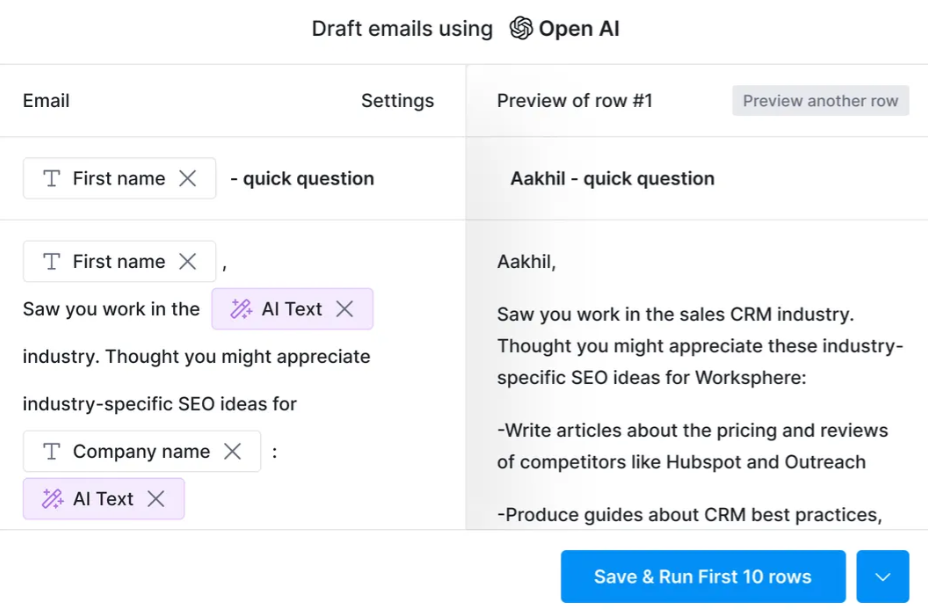

How to Use OpenAI to Write Cold Emails from Scratch with Clay

Learn how to use OpenAI to write personalized cold emails at scale with Clay. Set up the integration, craft better prompts, and boost deliverability.

How to Run a Personalized Demo Play at Scale with Clay

Learn how to automate a personalized demo play using Clay, Claygent, and AI enrichment to build custom mockups at scale. See how it works.

Automated Slide Deck Creation: How Clay Builds QBRs from Your Data

Clay's automated slide deck creation pulls from Snowflake, Salesforce, and Gong to build QBRs in minutes. Save 90+ hours per quarter. See how it works.

HG Insights + Clay: B2B Technographic and Firmographic Data

HG Insights surfaces deep technographic and firmographic data from billions of documents. Use it in Clay workflows to enrich accounts and power GTM. See how it works.

B2B Cold Email Deliverability: 21 Best Practices

Master B2B cold email deliverability with 21 proven best practices: domain setup, inbox warmup, authentication, and copy tips that keep you out of spam. Learn how.

Basics of Google Search Operators: A Practical Guide

Learn the basics of Google Search Operators and how to use them in Clay for prospecting, list building, and company research. See how it works.

AI Lead Generation: The Complete B2B Guide

Learn how AI lead generation automates list building, enrichment, and personalized outreach for B2B teams. Scale your pipeline without scaling headcount. See how it works.

Clay MCP: Ops-built workflows, consumable by reps

Clay MCP: Ops-built workflows, consumable by reps

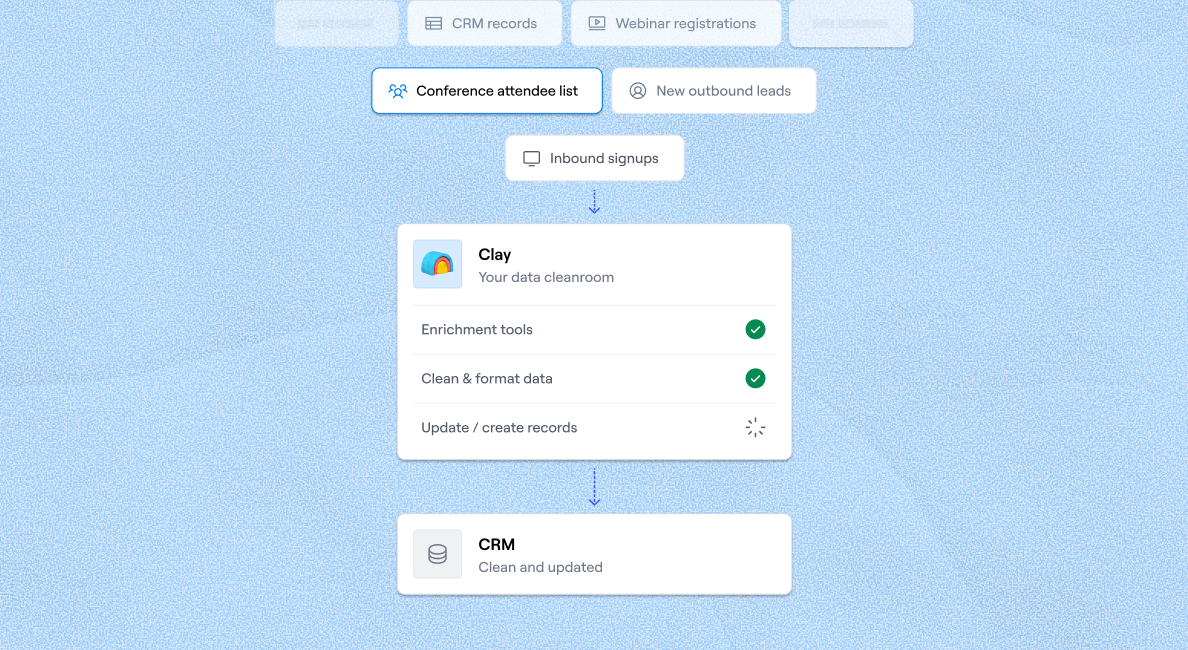

How to Manage and Enrich Inbound Leads Automatically

Learn how to manage and enrich inbound leads automatically using a four-phase workflow that scores, segments, and triggers outreach from one email. See how it works.

GTM Alpha: How Winning Teams Build a Competitive Edge

GTM alpha is the edge winning teams build with unique data and signal-based plays. Learn how to find hidden signals, run better plays, and outpace competitors.

Why Good CRM Data Matters and How Clay Helps

Poor CRM data kills outreach. Learn why CRM data coverage fails and how Clay's waterfall enrichment lifts coverage rates from 20% to 80%. See how it works.

How to Use Formulas in Clay: AI Generator and Manual Entry

Learn how to use formulas in Clay with the AI Formula Generator or manual entry. Transform and clean your data faster. See how it works.

GTM Engineering: What It Is, How It Works, and How to Hire

GTM engineering turns ops teams into revenue builders using AI and automation. Learn what GTM engineers do, how to structure the role, and how to hire one.

Formulas in Clay: A Beginner's Intro for Non-Engineers

Learn how to use formulas in Clay without coding. This intro covers conditional statements, combining columns, and auto-qualifying leads. Start in 30 minutes.

How Clay Uses Clay for SEO and AEO: 3 Systems That Scale

See how Clay uses Clay for SEO and AEO: automated content refresh, video-to-page conversion, and a custom AI visibility dashboard. Learn how.

Turn Web Visitors into Leads: A Warm Outbound Play for B2B Sales

Learn how to turn web visitors into leads using a warm outbound play for B2B sales — with RB2B, Clay, and Lemlist. See how it works.

How to Use Web Scraping to Enrich Your Data with Clay

Learn how to use web scraping to enrich your data without code. Clay's Claygent answers deep GTM research questions at scale. See how it works.

How to Create a Sales Prospect List in Minutes

Learn how to create your own sales prospect list in minutes using Clay. Pull from 40+ sources, enrich with ICP data, and export to your CRM. See how it works.

Best B2B Email List Providers: Tested and Ranked (2026)

We tested 8 B2B email list providers head-to-head. See accuracy results, per-email pricing, and how to waterfall providers for maximum coverage.

Outbound Sales Automation: How to 10x Pipeline Without More SDRs

Learn how outbound sales automation replaces manual SDR work, cuts cost per email by 100x, and scales pipeline without growing headcount. See how it works.

The Wake the Dead Play: Reactivate Closed-Lost Deals with Clay

The wake the dead play uses Clay + ChatGPT to send automated, personalized emails to closed-lost prospects. Restart stalled deals in a few steps. Learn how.

Three Tips to Guarantee Email Deliverability for Cold Outbound

Split volume, verify contacts, and personalize copy to guarantee email deliverability for cold outbound. Three actionable tips that keep you out of spam.

How Clay Uses Clay for Customer Support: 3 Real Workflows

See how Clay's customer support team uses Clay to enrich Intercom tickets, automate QA, and draft help articles. Real workflows, real results.

B2B Cold Email Copywriting: The Complete Guide

Master B2B cold email copywriting with proven templates, a research framework, and a checklist used to send 800k+ emails a month. Start writing emails that get replies.

Introducing Clay Functions

Build Your GTM Logic Once, Apply It Everywhere

Clay and Apollo Integration: Enrichment, Sequencing, and More

The Clay and Apollo integration unlocks 5X faster enrichment and direct sequencer API access. See how joint customers go from data to booked meetings.

The Many Lives of Spreadsheets: A History and What Comes Next

Explore the many lives of spreadsheets — from VisiCalc in 1979 to self-filling automation tools today. See how the no-code vision keeps evolving.

AI recruiting strategies

Learn our top AI recruiting workflows to help you identify, research, and reach out to qualified candidates for open roles. AI can eliminate manual work and help you reach out to—and land—better employees for your clients.

How to Hire a GTM Engineer: The Complete Guide

Learn how to hire a GTM engineer: when to make the hire, what skills to screen for, red flags to avoid, and where to find the best candidates. See how it works.

Inside Clay's GTM Engineering Lab: Plays, Principles, and Automation

See how Clay's GTM engineering lab turns internal problems into revenue plays using AI, automation, and data-driven principles. Learn how it works.

How to Build the Most Targeted Account Lists Possible

Generic tools leave bad-fit companies in your account list. Learn how to build targeted account lists using AI enrichment and real workflow examples in Clay.

Personalized Direct Mail at Scale: The Gifting Play with Clay

Learn how to run personalized direct mail campaigns using Clay — validate contacts, generate AI gift copy, and export to email. See how it works.

How to Set Up Your Full Inbound Sales Process on Clay

Learn how to set up your full inbound sales process on Clay — enrich leads, tag MQLs, and automate email campaigns from form to demo. See how it works.

AI-Enabled GTM for Private Equity: The Value Creation Playbook

Learn how AI-enabled GTM for private equity drives value creation across portfolios—from data quality to agentic workflows. See how it works.

Do More With Your Data: Clay's Post-Data-Provider Approach

Clay's post-data-provider approach combines 150+ providers, waterfall enrichment, and AI scraping to maximize data coverage. See how it works.

Google Maps Lead Generation for Niche Local Businesses

Learn how to use Google Maps lead generation to find niche local businesses, enrich owner contacts, and send personalized outreach at scale with Clay.

24 AI Email Personalization Examples for Cold Outreach (With Prompts)

Get 24 AI email personalization examples for cold outreach, with ChatGPT prompts you can run at scale in Clay. Learn how to write emails that actually convert.

How to Ace Your Follow-Ups: A Practical Sales Guide

Learn how to ace your follow-ups with value-driven outreach, personalization tips, multi-channel tactics, and automation tools that keep deals moving. See how it works.

How to Prioritize Your Waitlist with Lead Enrichment

Learn how to prioritize your waitlist using lead enrichment. Turn raw signups into qualified leads by company, title, and role — no long forms needed. See how.

B2B Cold Email Templates: Frameworks That Get Replies

Learn how to write B2B cold email templates that convert with a proven 5-part framework, follow-up strategy, and real examples. See how it works.

Audiences: now in Enterprise beta

Clay Audiences unifies your CRM, product data, and intent signals into one layer — so reps and agents can run precise, personalized GTM plays at scale.

The thinking behind our new pricing: our internal memo

Clay pricing memo: INTERNAL

Introducing Clay’s new pricing

Today, we’re launching a pricing update that reduces data costs, and simplifies and improves the value of our plans. Our goal is to have Clay be your default tool for GTM Engineering.

Clay partners with Lusha and Beauhurst to expand European data coverage

Lusha adds lookalike prospecting, contact enrichment, and signals in EMEA. Beauhurst adds private funding and corporate structure data in the UK and Germany.

Source your precise TAM from lookalikes you can trust with Ocean.io and Clay

Clay + Ocean now enable preview-based B2B lookalike discovery. Preview leads before committing credits and expand your TAM with greater precision.

Clay doubles down on supporting European GTM teams

Clay's waterfall enrichment delivers 2–3x more mobile phone coverage than leading solo providers across Europe. Plus new data partnerships, a London office, and timezone-aligned support.

In Nigeria, she built a life where money wouldn’t decide

Clay blog | In Nigeria, she built a life where money wouldn’t decide

Sculptor Analyst Mode: Turning Context-Rich Data Into Actionable GTM Insights

Gather business intelligence and share documents of this analysis directly from Sculptor

In a place where girls often choose between career or marriage, she carved her own path

Javeria Shah won the Clay Cup 2025 despite being denied a US visa and competing remotely from Pakistan. Learn how she transitioned from electronics engineering into GTM engineering and built her own business.

How we designed Sculpt

Our first conference, Sculpt, is where the analog soul of Clay met the digital mind of Clay.

Clay announces second employee tender offer in nine months at a $5B valuation

A rare repeat employee liquidity event, designed to give builders flexibility as Clay accelerates

Clay is now available as a connector in Claude

Bring Clay's contact databases, enrichment providers, and AI agents into your Claude workflow.

Sellers have a new AI edge: Clay in ChatGPT

Use Clay directly in ChatGPT to find the right buyers, research people and companies, and draft personalized outbound. One conversation, powered by live GTM data.

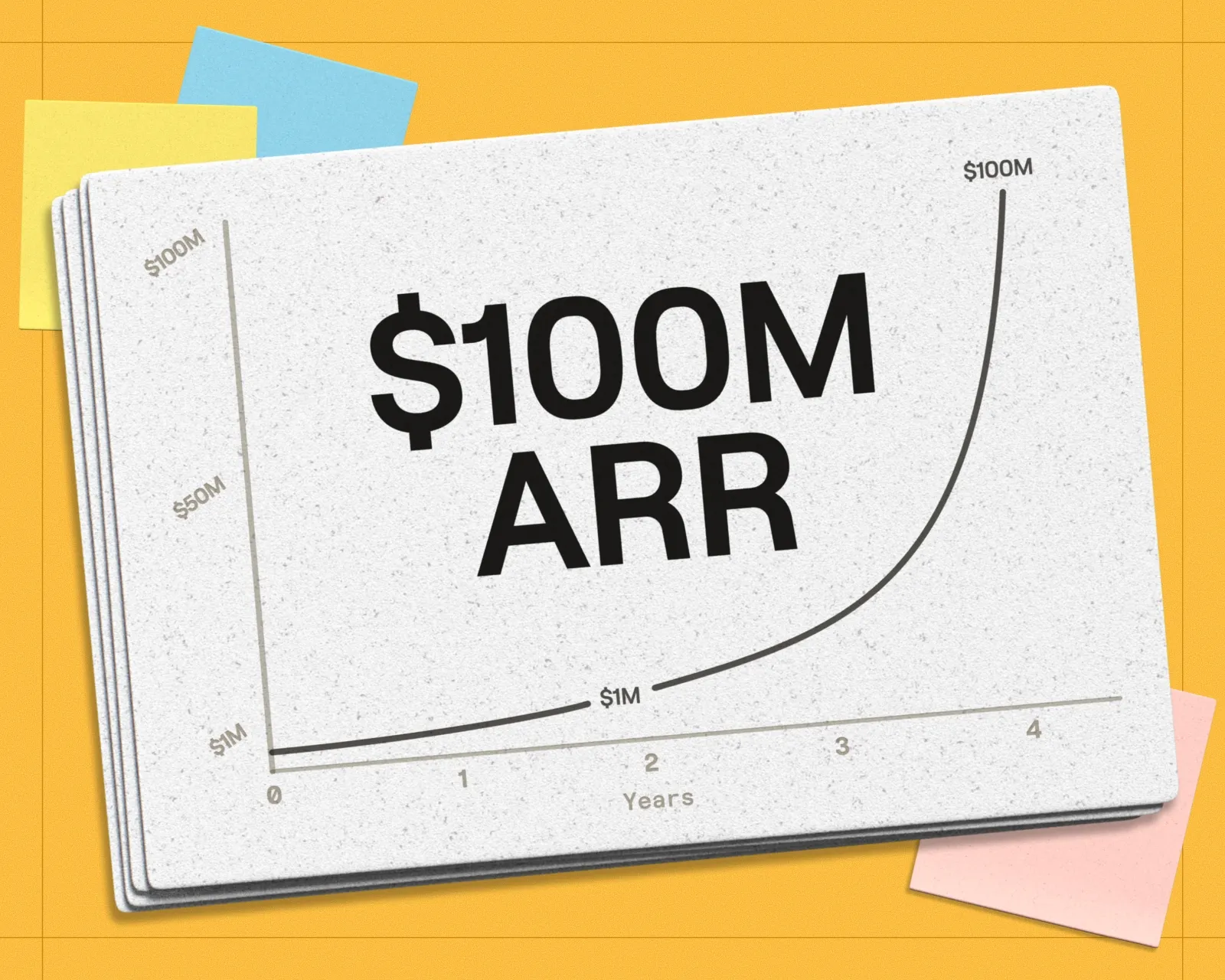

Clay reaches $100M ARR

Clay has crossed $100M ARR, growing from $1M to $100M in two years after six years of foundational product work. The milestone reflects durable customer adoption, efficient growth, and an ecosystem of GTM builders using Clay to power their business.

Clay Certifications: Turning mastery into credentials that matter

The Clay education team has built a certification program that runs entirely on Clay and gives users credentials that actually matter

Mobile Phone Verification Methodology

Clay has partnered with The Kiln to setup a series of large-scale data test across mobile phone, work email, personal email, email verification, and more. Below, we explain the approach to these tests.

Work Email Verification Methodology

Clay has partnered with The Kiln to setup a series of large-scale data test across mobile phone, work email, personal email, email verification, and more. Below, we explain the approach to these tests.

Stop Guessing, Start Analyzing: How Sculptor Turns Your GTM Data Into Your Competitive Advantage

Analyze your GTM data with Sculptor to turn fragmented information into actionable insight.

Find and outreach local businesses with Openmart and Clay Sequencer

Get the right contacts for local businesses without stitching together multiple tools or wasting valuable time on setup instead of selling.

Announcing Web Intent

Use Website Intent in Clay to see which companies visit your site, track engagement, and trigger personalized GTM plays. Turn website traffic into real buyer intent data.

How Clay Uses Clay: Conversational Data

How we use Clay to mine millions of pages of call transcripts to generate revenue, and how you can use it too.

Sculpting GTM’s future with six major launches

Today at Sculpt, we're launching six major features that will help teams turn any growth idea into reality faster.

Introducing Claygent Navigator

A new Claygent model that can use a browser to take actions and extract information from webpages.

Announcing the Clay Partner Program

The Clay Partner Program is to a partner, what a toolbox is to an artist. It keeps essential resources within reach and grows more sophisticated as your expertise develops. We've designed everything around one simple principle: helping you grow your business as Clay grows.

Introducing GPT-5 in Claygent: sharper research, stronger formulas, better outbound

GPT-5 is now a model option across Clay, bringing the best research and conversational writing we've ever shipped to your GTM workflows.

Clay Series C announcement. The GTM engineering era begins now

We raised a $100M Series C at a $3.1B valuation to power GTM engineering!

Claygent surpasses 1 billion runs

The world's most loved AI research agent in GTM has passes a huge milestone at 1 billion runs.

Announcing Sculpt: Clay’s first annual user conference

Join us for Sculpt, Clay’s first annual user conference on Sept 17 in San Francisco where GTM leaders build AI workflows, share creative tactics, and get early access to new features.

Announcing custom signals at Clay

Clay's new custom signals platform helps sales teams track unique data changes that indicate buying opportunities. Turn any data point into a sales signal, enrich with context, and automate personalized outreach to find GTM alpha your competitors miss.

Clay announces employee tender offer led by Sequoia at $1.5B valuation

Clay allows employees to sell vested shares for immediate liquidity through a $20M tender offer at a $1.5B valuation. With 10x revenue growth in 2022-2023 and serving 8,000+ customers including OpenAI and Hubspot, Clay continues to change how businesses approach go-to-market strategies with their AI agent Claygent.

Create personalized presentations at scale with Clay and Google Slides

Automate personalized sales decks with Clay’s Google Slides integration. Instantly generate tailored presentations for leads, customers, QBRs, and internal updates. Use one template to create hundreds of presentations at scale.

Turn Gong conversations into automated GTM workflows

Clay now integrates with Gong—turn messy call transcripts into powerful automations in Salesforce, HubSpot, Notion, Slack, Google Sheets, and 100+ other integrations.

Product

Use Cases

Solutions

Resources

Company

Pricing

Features

Additional

How Clay uses Clay

LinkedIn + Meta Ads on Autopilot

CRM enrichment

Keep your CRM clean with the highest quality data

BY TEAM

BY STAGE

BY CUSTOMERS

Vanta

Link long form description will go in this slot here.

depthfirst

Link long form description will go in this slot here.

ElevenLabs

Link long form description will go in this slot here.

Exit Five

Link long form description will go in this slot here.

AlertMedia

Link long form description will go in this slot here.

Intercom

Grew their outbound-sourced pipeline by +140%

START GROWING

DISCOVER

Community

PARTNER WITH US

Clay Commnity

In Nigeria, she built a life where money wouldn’t decide

OUR COMPANY

GET IN TOUCH

SOCIALS

Article – NY Times

Clay allows employees to sell shares at a $5b valuation.

Websites That Allow Web Scraping: How to Find and Use Them

While many tools offer features like CAPTCHA solvers and IP rotation for collecting data from websites that prohibit scraping, using them is not recommended. By signing up on any website, you agree to its terms of service and policies, and breaking them could lead to a ban or even legal action.

To help you scrape data ethically, this guide will teach you how to zero in on websites that allow web scraping. You'll also find examples of proven pro-scraping sites, as well as a recommendation for a web scraping tool that can help you extract data seamlessly and responsibly.

TL;DR

- You can verify whether a website allows scraping by checking its robots.txt file, HTTP headers, terms of service, and any Open Data initiative mentions.

- Many websites across finance, sports, weather, public databases, job boards, and travel actively permit scraping or provide official APIs for it.

- Public data is generally safe to scrape; personally identifiable information (PII) is subject to GDPR and CCPA restrictions, and copyrighted data requires explicit permission.

- Tools like Clay combine web scraping with waterfall enrichment across 150+ data providers, letting you extract, enrich, and act on data in one workflow.

Why Do Websites Allow Web Scraping?

While some websites and platforms prohibit scraping to protect their users' privacy, extracting publicly available data is generally legal, as long as it complies with the laws and regulations of the relevant country. Luckily, many websites permit scraping for their own benefit, including:

6 Ways To Identify Websites That Allow Web Scraping

To ensure you're scraping data ethically, take the following steps to verify each platform's stance on data scraping:

1. Read the Robots.txt File

A robots.txt file sits at the root of a website and tells crawlers and scrapers which pages they may access.

You can find the file by adding /robots.txt to the end of the domain of the website you want to scrape. For example:

If a website doesn't have a robots.txt file or hasn't specified otherwise, it may implicitly allow scraping. Still, to stay on the safe side, consider the other indicators we discuss in this guide.

Keep in mind that robots.txt is advisory: web scrapers are not technically forced to follow its instructions, but the file should help you understand where the website stands on scraping.
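The check described above can be sketched with Python's standard library. This is a minimal sketch, not a definitive implementation: the `my-scraper` user agent, the `example.com` URLs, and the sample rules are all hypothetical, and the rules are parsed from a string so the example runs without network access.

```python
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


def robots_url(site: str) -> str:
    """Build the robots.txt URL for the root of a site."""
    return urljoin(site, "/robots.txt")


def parse_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


# Hypothetical robots.txt content (an assumption for illustration only).
SAMPLE = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

rules = parse_rules(SAMPLE)
allowed_public = rules.can_fetch("my-scraper", "https://example.com/products")
allowed_private = rules.can_fetch("my-scraper", "https://example.com/private/data")
delay = rules.crawl_delay("my-scraper")  # seconds to wait between requests, if set
```

For a live site, `RobotFileParser` can also download the file itself: call `set_url(robots_url(site))` followed by `read()` instead of `parse()`.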

2. Look for a Scraping API

Some websites that allow web scraping provide an official scraping API for users to integrate. Some of the popular solutions include:

By offering a scraping API, the website can control the volume and frequency of requests to avoid overloading its servers. It also helps enhance data security through encryption and access controls, ensures data integrity by offering it in reliable formats, and eliminates data errors inherent in using web scrapers. 💻

To check if a website has a scraping API:

3. Read the Terms of Service

Sites that allow web scraping may explicitly state it in their terms of service, alongside guidelines on how to scrape and use the data responsibly.

You can check by opening the terms of service page and searching for words like scraping, data use, and crawling. If anything is unclear, you can contact the support team for consultation. 🗣️
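If the terms of service are long, a short script can surface the relevant sentences for you to read. The sketch below assumes you have already copied the page text into a string; the function name and the sample text are hypothetical, and the keyword list simply mirrors the words suggested above. It only finds candidate sentences and does not interpret them.

```python
import re

# Keywords taken from the suggestion above; extend the tuple as needed.
KEYWORDS = ("scraping", "data use", "crawling")


def find_scraping_clauses(terms_text: str, keywords=KEYWORDS) -> list[str]:
    """Return the sentences that mention any keyword (case-insensitive)."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", terms_text)
    pattern = re.compile("|".join(re.escape(k) for k in keywords), re.IGNORECASE)
    return [s.strip() for s in sentences if pattern.search(s)]


# Hypothetical terms-of-service excerpt (an assumption for illustration only).
sample_terms = (
    "You may browse the site freely. "
    "Automated scraping of listings is permitted at a reasonable rate. "
    "Contact us with questions."
)
matches = find_scraping_clauses(sample_terms)
```

Treat the matches as a reading list, not a verdict: a sentence that mentions scraping may permit it, restrict it, or prohibit it.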

4. Inspect HTTP Headers

Some websites set the X-Robots-Tag HTTP header to control the behavior of scrapers at a more detailed level than using the robots.txt file. While primarily designed to instruct search engine crawlers on how to interact with a website's resources, it can also work with web scrapers.

Like the robots.txt file, the X-Robots-Tag doesn't block access to the website's resources. Its effectiveness depends on web scrapers respecting its directives. Still, the header can help you understand a website's stance on web scraping.

Here's how to check the HTTP header of a website using your browser's developer tools:

You can also check by installing the Web Developer plugin or Robots Exclusion Checker extension in Chrome or by creating a simple Python script. ⌨️
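A simple Python script for this check might look like the sketch below. It uses only the standard library; the `my-scraper` user agent is a hypothetical name, and the parsing is deliberately simplified: it splits directives on commas and does not handle agent-specific prefixes such as `googlebot: noindex`.

```python
from urllib.request import Request, urlopen


def fetch_x_robots_tag(url: str, user_agent: str = "my-scraper") -> list[str]:
    """Send a HEAD request and return every X-Robots-Tag header value."""
    request = Request(url, method="HEAD", headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        return response.headers.get_all("X-Robots-Tag") or []


def parse_directives(header_values: list[str]) -> set[str]:
    """Split header values into a set of lowercase directives."""
    directives = set()
    for value in header_values:
        for part in value.split(","):
            part = part.strip().lower()
            if part:
                directives.add(part)
    return directives


# Usage against a live site (requires network access):
#   directives = parse_directives(fetch_x_robots_tag("https://example.com/"))
#   print(directives or "no X-Robots-Tag header set")
```

An empty result only means the header is absent on that URL; it is not permission by itself, so combine it with the other checks in this guide.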

5. Assess the Type of Data on the Website

There are three general types of website data: public, personal, and copyrighted. Here's what each of them may look like:

In the context of scraping, public data is the information you can access without a login. A website featuring this type of information is generally safe for scraping unless it explicitly prohibits it in its robots.txt file, HTTP headers, or terms of use.

On the other hand, there are no universal guidelines on scraping personally identifiable information (PII). Each jurisdiction has different regulations. The two most notable are the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA), which restrict how PII can be collected, stored, and used. GDPR requires a lawful basis for processing, such as the individual's consent, while CCPA gives consumers the right to know what is collected about them, request its deletion, and opt out of its sale.

Websites with copyrighted data can prohibit scraping depending on how you plan to use it. Copyrighted data is owned by individuals or businesses with explicit control over its collection and reproduction. 📁

💡 Bonus read: Check out these articles that offer solutions for scraping:

6. Check for Open Data Initiatives

Some websites participate in Open Data initiatives and provide datasets that anyone can freely access, use, and redistribute. They usually have explicit permissions for scraping and exporting data, which you can find in the terms and conditions.

To check if a website embraces the open data ethos, look for mentions of Open Data on the About Us page.

💡 Tip: If the six methods above still don't clarify the website's stance, contact the admin or owner for permission or clarification on the approved data scraping methods or channels.

Which Websites Allow Web Scraping?

Below, you'll find recommendations of websites to scrape data from, grouped into the following categories:

Finance 💲

Websites that provide information and tools related to finances, such as real-time and historical market data and financial news, often allow scraping. Examples include:

Sports ⚽

Sports websites that provide comprehensive sports coverage can allow fans, analysts, and researchers to scrape data like league standings and performance stats for players and teams. Examples include:

Weather ☔

Weather websites have data like forecasts and current conditions, which can be valuable in a wide range of industries, including sports, mining, agriculture, and construction. Examples include:

Public Databases 📂🌐

Public databases contain data on crime, health, employment, regulations, demographics, and other public records, which are often free to scrape, reuse, and redistribute, subject to each dataset's license. Examples include:

Job Boards 👷

Some job boards allow scraping of data such as job titles, job descriptions, and company names. Examples include:

Travel and Hospitality ✈️

Travel and hospitality websites can allow scraping prices, flights, hotels, and destinations to help with travel planning and booking. Examples include:

Best Practices When Scraping Websites That Allow Web Scraping

Once you've found the website you want to scrape, consider these tips to extract data effectively while remaining respectful of the website's resources:

How To Extract High-Quality Data From Sites That Allow Web Scraping

While manually extracting data is possible, large-scale projects require a web data scraper as it's more practical than opening each page and copy-pasting the information you need. With a robust data scraper, you can extract high-quality data from thousands, or even millions, of pages quickly and efficiently.

That said, not all tools are created equal, so look for one that ticks the following boxes:

To minimize costs, look for a data scraper with enrichment features to collect and consolidate data from multiple sources and ensure your results are accurate, current, and relevant. Such a centralized solution can help you save time, money, and human resources. 💸

If you're looking for a recommendation on a tool that lets you extract, format, and enrich high-quality data consistently, Clay is the way to go. It taps into 150+ data providers to find the information you need, as discussed in detail below. 👇

How Clay Can Help You Scrape High-Quality Data

Clay is a comprehensive data sourcing and enrichment platform with robust scraping capabilities. It offers two native, automated features that let you collect data from the web:

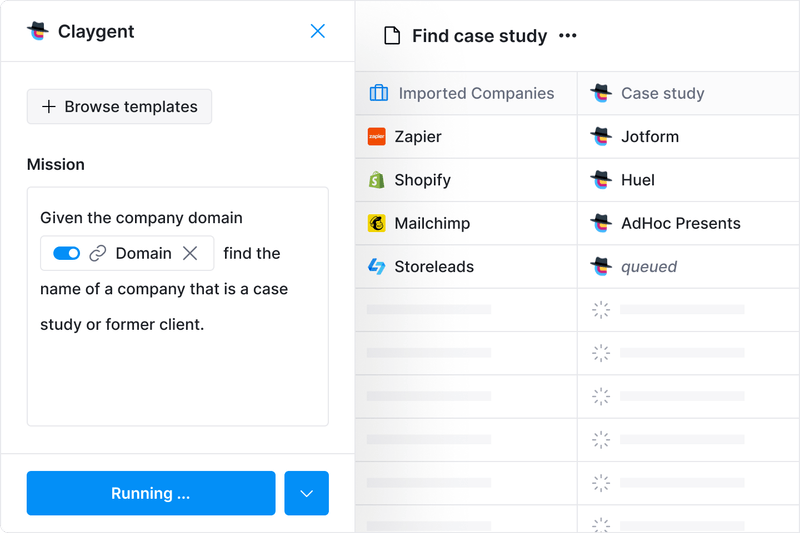

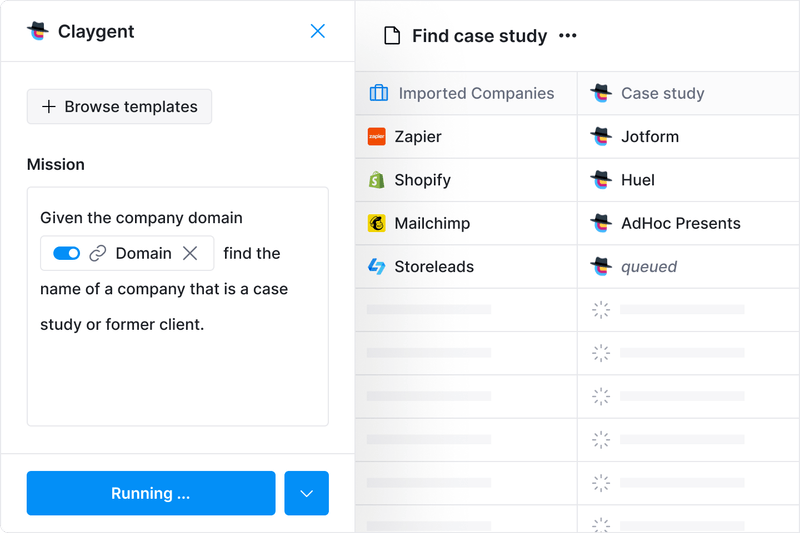

Claygent is an AI data scraper that lets you scrape websites through prompts. All you have to do is tell the assistant what data points you need and where to get them from. Claygent will find and organize the results for you in a few seconds.

You can use it to find details about people and companies without lifting a finger, making it the ideal scraper if you are new to scraping or want a hands-off way to extract data. 💪

If you want to scrape data as you browse, use Clay's Chrome Extension. It collects info automatically when you open a page. You can also create custom data scraping recipes to meet your unique needs without coding. 🖱️

While impressive, web scraping is just the tip of the iceberg when it comes to Clay's functionality. The platform offers numerous data enrichment tools to fill in any missing points.

Maximize Data Coverage With Clay

After scraping, leverage Clay's state-of-the-art data enrichment features to improve the quality of your results. Unlike typical web scrapers, Clay integrates with dozens of data providers, giving you unrivaled coverage and reliability.

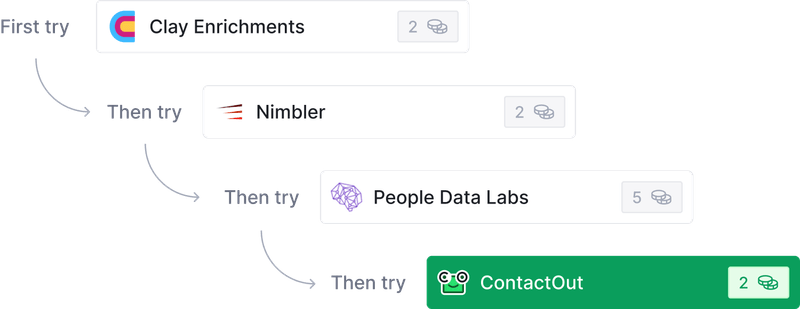

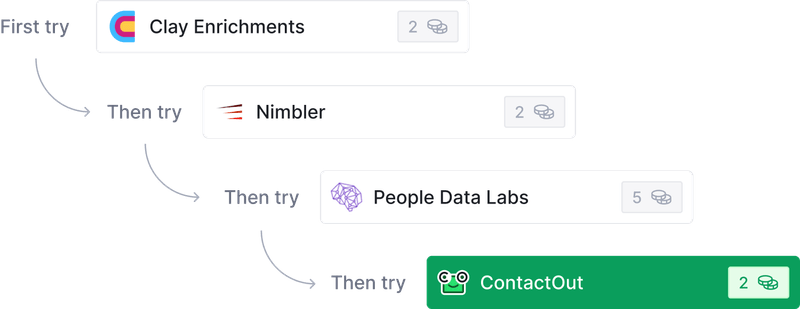

To enrich your results, Clay lets you choose the data providers to tap into and uses waterfall enrichment to check each database one by one until it finds the info you need. You only pay for the information you find, which helps avoid cost overruns. 💵
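To make the waterfall idea concrete, here is a minimal sketch of the general pattern: try each provider in order and stop at the first one that returns a result. This is an illustration only, not Clay's implementation or API. The provider names, the lookup functions, and the sample data are all hypothetical, and billing is not modeled.

```python
from typing import Callable, Optional

# A provider is any function that takes a lookup key and returns a value or None.
Provider = Callable[[str], Optional[str]]


def waterfall(
    key: str, providers: list[tuple[str, Provider]]
) -> tuple[Optional[str], Optional[str], int]:
    """Query providers in order and stop at the first hit.

    Returns (value, provider_name, providers_tried).
    """
    for tried, (name, lookup) in enumerate(providers, start=1):
        value = lookup(key)
        if value:
            return value, name, tried
    return None, None, len(providers)


# Hypothetical providers backed by in-memory dictionaries.
provider_a = {"acme.com": "ceo@acme.com"}.get
provider_b = {"globex.com": "info@globex.com"}.get
providers = [("provider_a", provider_a), ("provider_b", provider_b)]

result_first = waterfall("acme.com", providers)     # found by the first provider
result_second = waterfall("globex.com", providers)  # falls through to the second
result_none = waterfall("initech.com", providers)   # no provider has a match
```

Because the loop returns on the first hit, later providers are never queried for that record, which is what keeps the cost tied to successful lookups.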

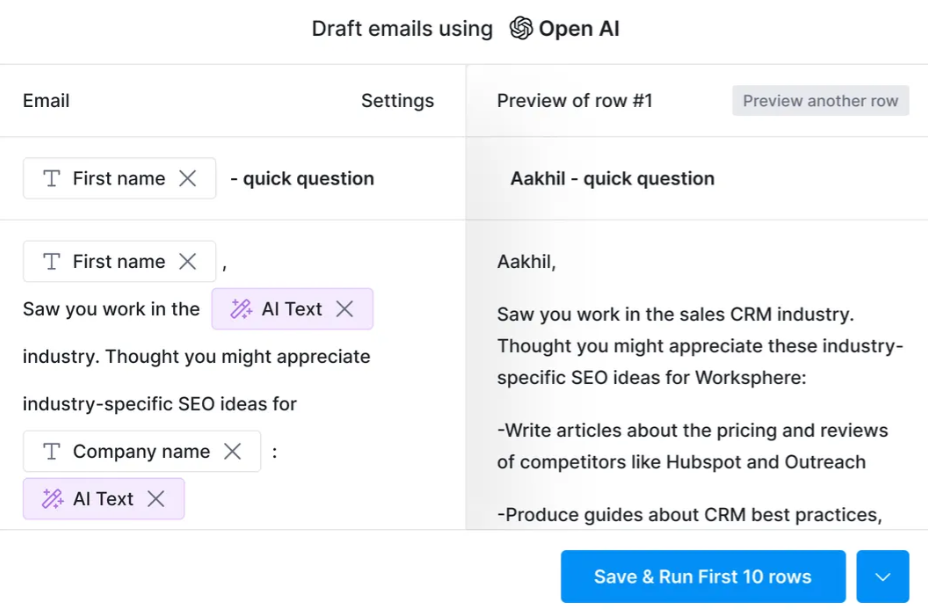

After enriching your data, you can push it to your CRM, export it as a CSV file, or, if you are running an outbound campaign, use the AI email drafter to create hyper-personalized emails for each lead. 📧

Find Clay's Endless Possibilities

The impressive scraping and enrichment features are only the beginning. Clay has over 100 integrations, many of which can simplify the process of extracting data. The table below highlights some of them:

The platform also offers dozens of templates that come with pre-built Clay tables and automations to help you perform various scraping tasks, such as:

Many marketers, sales pros, and individual users who've tried Clay are in awe of what it can do. Here's what one of them had to say about it:

Create Your Clay Account

You can explore Clay's features in three quick steps:

Clay offers a free forever plan that lets you test the platform. If you like what you see, opt for one of the four paid plans outlined in the table below:

If you want to know more about the platform and what it can do, check out Clay University and join the Slack community. To stay updated on the latest tips and features, sign up for Clay's newsletter. 💌

Frequently Asked Questions

Is web scraping legal?

Scraping publicly available data is generally legal, provided it complies with the laws of the relevant jurisdiction. However, scraping personally identifiable information (PII) without consent may violate GDPR or CCPA, and scraping copyrighted content without permission can create legal exposure. Always check a site's terms of service before scraping.

How do I know if a website allows web scraping?

Check the site's robots.txt file, review its terms of service for mentions of scraping or crawling, inspect HTTP headers for X-Robots-Tag directives, and look for an official API. If the site participates in Open Data initiatives, it will typically say so on its About Us page. When in doubt, contact the site owner directly.

What is the difference between scraping public data and personal data?

Public data is information accessible without a login, such as news articles, public profiles, and product listings. It is generally safe to scrape unless explicitly prohibited. Personal data includes names, addresses, phone numbers, and biometric data. Scraping PII is subject to strict regulations under GDPR and CCPA and requires a lawful basis, such as the data subject's explicit consent.

Which types of websites most commonly allow web scraping?

Finance sites (Yahoo Finance, Google Finance), sports reference sites, weather platforms, public government databases, job boards, and travel booking sites are among the most common categories that permit scraping. Many provide official APIs to make structured data access even easier.


While many tools offer features like CAPTCHA solving and IP rotation for collecting data from websites that prohibit scraping, using them is not recommended. By signing up on a website, you agree to its terms of service and policies, and breaking them could lead to a ban or even legal action.

To help you scrape data ethically, this guide will teach you how to zero in on websites that allow web scraping. You'll also find examples of proven pro-scraping sites, as well as a recommendation for a web scraping tool that can help you extract data seamlessly and responsibly.

TL;DR

- You can verify whether a website allows scraping by checking its robots.txt file, HTTP headers, terms of service, and any Open Data initiative mentions.

- Many websites across finance, sports, weather, public databases, job boards, and travel actively permit scraping or provide official APIs for it.

- Public data is generally safe to scrape; personally identifiable information (PII) is subject to GDPR and CCPA restrictions, and copyrighted data requires explicit permission.

- Tools like Clay combine web scraping with waterfall enrichment across 150+ data providers, letting you extract, enrich, and act on data in one workflow.

Why Do Websites Allow Web Scraping?

While some websites and platforms prohibit scraping to protect their users' privacy, extracting publicly available data is still legal as long as it complies with the relevant country's laws and regulations. Luckily, many websites permit scraping for their own benefit, including:

6 Ways To Identify Websites That Allow Web Scraping

To ensure you're scraping data ethically, take the following steps to verify each platform's stance on data scraping:

1. Read the Robots.txt File

A robots.txt file sits at the root of a website and tells crawlers and scrapers which pages they may access.

You can find the file by adding /robots.txt to the end of the domain of the website you want to scrape (for example, https://example.com/robots.txt).

If a website doesn't have a robots.txt file, or the file doesn't restrict the pages you need, the site may implicitly allow scraping. Still, to stay on the safe side, consider the other indicators we discuss in this guide.

Keep in mind that robots.txt is advisory: nothing technically forces a scraper to follow its instructions, but the file should help you understand where the website stands on scraping.
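As a minimal sketch, Python's standard-library `urllib.robotparser` can read robots.txt rules and tell you whether a given path is allowed. The `can_scrape` helper, the sample rules, the `my-scraper` user agent, and the example.com URLs below are all made up for illustration.

```python
from urllib.robotparser import RobotFileParser


def can_scrape(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Sample rules for illustration only; a real site's file will differ.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
Allow: /
"""

print(can_scrape(SAMPLE_ROBOTS, "my-scraper", "https://example.com/products"))
print(can_scrape(SAMPLE_ROBOTS, "my-scraper", "https://example.com/private/data"))
```

To check a live site instead of a string, `RobotFileParser` also offers `set_url()` and `read()`, which download the file before you call `can_fetch()`.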

2. Look for a Scraping API

Some websites that allow web scraping provide an official scraping API for users to integrate. Some of the popular solutions include:

By offering a scraping API, the website can control the volume and frequency of requests to avoid overloading its servers. It also helps enhance data security through encryption and access controls, ensures data integrity by offering it in reliable formats, and eliminates data errors inherent in using web scrapers. 💻

To check if a website has a scraping API:

3. Read the Terms of Service

Sites that allow web scraping may explicitly state it in their terms of service, alongside guidelines on how to scrape and use the data responsibly.

You can check by opening the terms of service page and searching for words like scraping, data use, and crawling. If anything is unclear, you can contact the support team for consultation. 🗣️
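If the terms are long, a small script can surface the sentences worth reading. The sketch below assumes you have already copied the terms into a string; the `find_scraping_clauses` helper and the keyword stems are illustrative choices, and the output is a reading aid, not a legal interpretation.

```python
import re

# Stems chosen for this sketch: "scrap" matches scrape/scraping/scraper,
# "crawl" matches crawl/crawling/crawler.
KEYWORDS = ("scrap", "crawl", "data use")


def find_scraping_clauses(terms_text: str, keywords=KEYWORDS) -> list:
    """Return the sentences in terms_text that mention any keyword."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", terms_text.strip())
    return [s for s in sentences if any(k in s.lower() for k in keywords)]


# Made-up terms text for illustration.
sample_terms = (
    "You may not use automated scraping tools on this site. "
    "We value our community. "
    "Crawling is permitted only for the paths listed in robots.txt."
)

for clause in find_scraping_clauses(sample_terms):
    print("-", clause)
```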

4. Inspect HTTP Headers

Some websites set the X-Robots-Tag HTTP header to control the behavior of scrapers at a more detailed level than using the robots.txt file. While primarily designed to instruct search engine crawlers on how to interact with a website's resources, it can also work with web scrapers.

Like the robots.txt file, the X-Robots-Tag doesn't block access to the website's resources. Its effectiveness depends on web scrapers respecting its directives. Still, the header can help you understand a website's stance on web scraping.

Here's how to check the HTTP header of a website using your browser's developer tools:

You can also check by installing the Web Developer plugin or Robots Exclusion Checker extension in Chrome or by creating a simple Python script. ⌨️
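A simple Python script for this could look like the sketch below, which uses only the standard library. The function names, the `example-scraper/0.1` user agent, and the set of directives treated as restrictive are assumptions for illustration. The parser is deliberately naive: it splits on commas and does not handle bot-specific prefixes such as `googlebot: noindex`.

```python
from urllib.request import Request, urlopen

# Directives this sketch treats as a sign the owner wants limited reuse.
RESTRICTIVE = {"noindex", "nofollow", "none", "noarchive", "nosnippet"}


def parse_x_robots_tag(header_values) -> set:
    """Split X-Robots-Tag header values into a set of lowercase directives."""
    directives = set()
    for value in header_values:
        for part in value.split(","):
            part = part.strip().lower()
            if part:
                directives.add(part)
    return directives


def restrictive_directives(header_values) -> set:
    """Return only the directives that appear in RESTRICTIVE."""
    return parse_x_robots_tag(header_values) & RESTRICTIVE


def fetch_x_robots_tag(url: str, user_agent: str = "example-scraper/0.1") -> list:
    """Send a HEAD request and return all X-Robots-Tag header values."""
    request = Request(url, method="HEAD", headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        return response.headers.get_all("X-Robots-Tag") or []


# Offline example with made-up header values:
print(sorted(restrictive_directives(["noindex, nofollow", "NOARCHIVE"])))
```

For a live check, pass the result of `fetch_x_robots_tag("https://example.com/")` to `restrictive_directives`. An empty set means the page sent no restrictive directives in this header; it does not by itself mean scraping is permitted.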

5. Assess the Type of Data on the Website

There are three general types of website data: public, personal, and copyrighted. Here's what each of them may look like:

In the context of scraping, public data is the information you can access without a login. A website featuring this type of information is generally safe for scraping unless it explicitly prohibits it in its robots.txt file, HTTP headers, or terms of use.

On the other hand, there are no universal guidelines on scraping personally identifiable information (PII). Each jurisdiction has different regulations. The two most notable are the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). GDPR prohibits collecting, storing, or using PII without a lawful basis, of which the data subject's consent is one, while CCPA gives California residents rights over how businesses collect and use their personal information.

Websites with copyrighted data can prohibit scraping depending on how you plan to use it. Copyrighted data is owned by individuals or businesses with explicit control over its collection and reproduction. 📁


6. Check for Open Data Initiatives

Some websites participate in Open Data initiatives and provide free access to datasets that anyone can use and redistribute. They usually have explicit permissions for scraping and exporting data, which you can find in the terms and conditions.

To check if a website embraces the open data ethos, look for mentions of Open Data on the About Us page.

💡 Tip: If the six methods above still don't clarify the website's stance, contact the admin or owner for permission or clarification on the approved data scraping methods or channels.

Which Websites Allow Web Scraping?

Below, you'll find recommendations of websites to scrape data from, grouped into the following categories:

Finance 💲

Websites that provide information and tools related to finances, such as real-time and historical market data and financial news, often allow scraping. Examples include:

Sports ⚽

Sports websites that provide comprehensive sports coverage can allow fans, analysts, and researchers to scrape data like league standings and performance stats for players and teams. Examples include:

Weather ☔

Weather websites have data like forecasts and current conditions, which can be valuable in a wide range of industries, including sports, mining, agriculture, and construction. Examples include:

Public Databases 📂🌐

Public databases contain data on crime, health, employment, regulations, demographics, and other public records, which are generally free to scrape, reuse, and redistribute, subject to each dataset's license. Examples include:

Job Boards 👷

Some job boards allow scraping of data such as job titles, job descriptions, and company names. Examples include:

Travel and Hospitality ✈️

Travel and hospitality websites can allow scraping prices, flights, hotels, and destinations to help with travel planning and booking. Examples include:

Best Practices When Scraping Websites That Allow Web Scraping

Once you've found the website you want to scrape, consider these tips to extract data effectively while remaining respectful of the website's resources:

How To Extract High-Quality Data From Sites That Allow Web Scraping

While manually extracting data is possible, large-scale projects require a web data scraper as it's more practical than opening each page and copy-pasting the information you need. With a robust data scraper, you can extract high-quality data from thousands, or even millions, of pages quickly and efficiently.

That said, not all tools are created equal, so look for one that ticks the following boxes:
