Post-apocalyptic education

What comes after the Homework Apocalypse

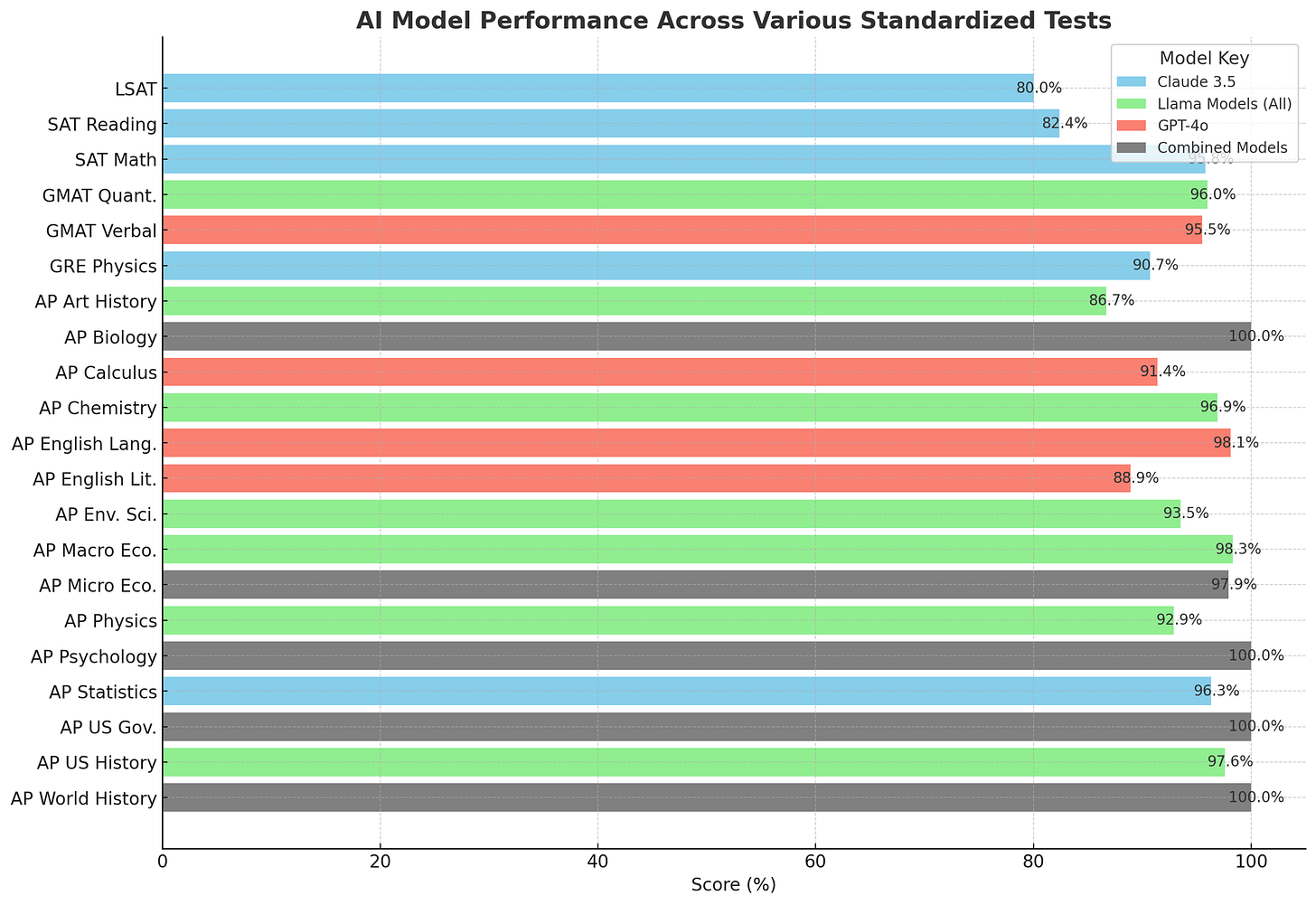

Last summer, I wrote about the Homework Apocalypse, the coming reality where AI could complete most traditional homework assignments, rendering them ineffective as learning tools and assessment measures. My prophecy has come true, and AI can now ace most tests. Yet remarkably little has changed as a result, even as AI use has become nearly universal among students.

As of eight months ago, a representative survey in the US found that 82% of undergraduates and 72% of K-12 students had used AI for school. That is extraordinarily rapid adoption. Of the students using AI, 56% used it for help with writing assignments, and 45% for completing other types of schoolwork. The survey found many positive uses of AI as well, which we will return to, but for now, let’s focus on the question of AI assistance on homework. Students don’t always see getting AI help as cheating (to them, they are simply getting answers to a tricky problem or help with a challenging part of an essay), but many teachers do.

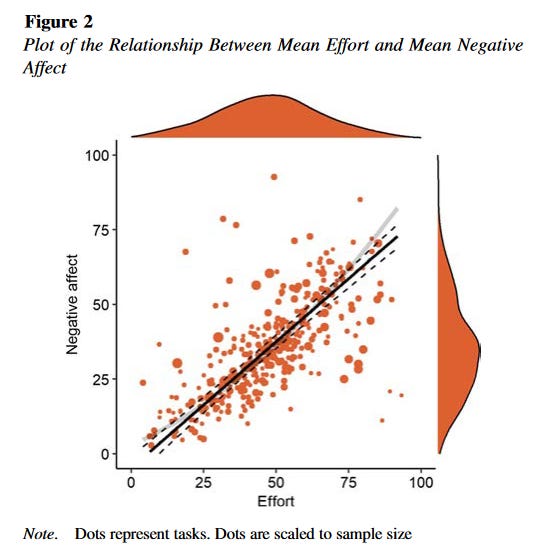

To be clear, AI is not the root cause of cheating. Cheating happens because schoolwork is hard and high stakes. And schoolwork is hard and high stakes because learning is not always fun, and forms of extrinsic motivation, like grades, are often required to get people to learn. People are exquisitely good at figuring out ways to avoid things they don’t like to do, and, as a major new analysis shows, most people don’t like mental effort. So they delegate some of that effort to the AI. In general, I am in favor of delegating tasks to AI (the subject of my new class on MasterClass), but education is different: the effort is the point.

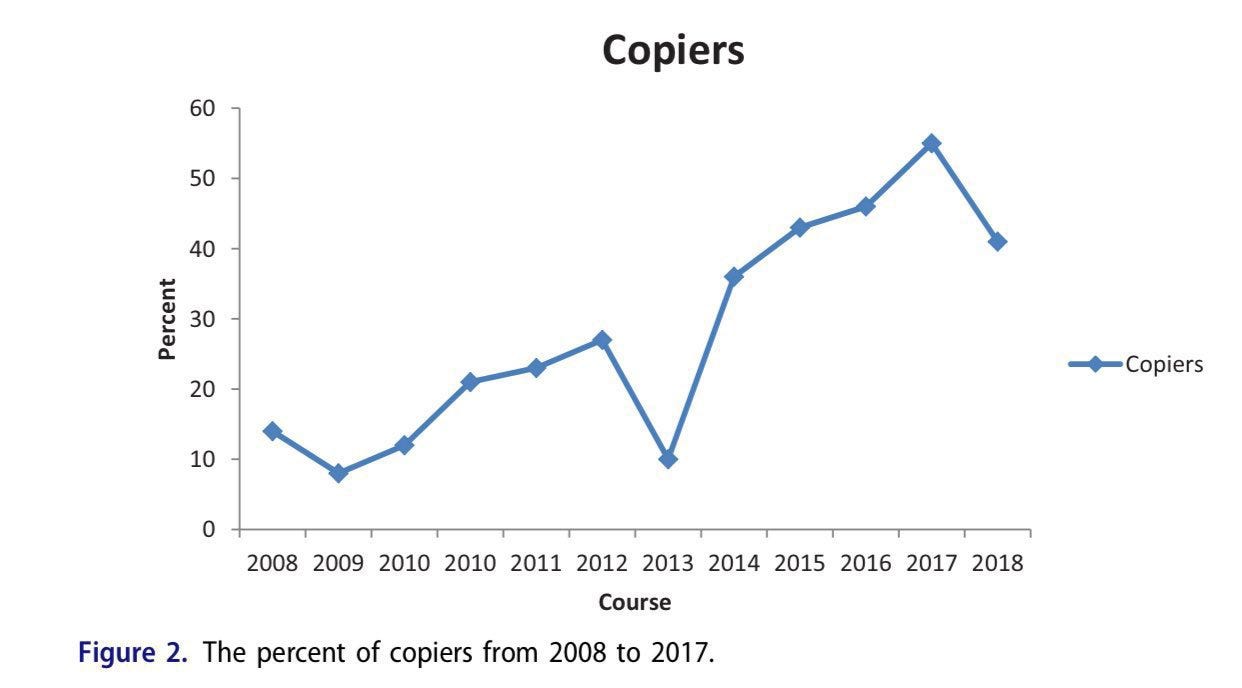

This is not a new problem. One of the first uses of any new technology has always been to get help with homework. A study of thousands of students at Rutgers found that in 2008, doing homework improved test grades for 86% of students (see, homework really does help!), but by 2017 it helped only 45% of them. Why? The rise of the Internet. By 2017, a majority of students were copying answers from the Internet rather than doing the work themselves.

The Homework Apocalypse has already happened and may even have happened before generative AI! Why are more people not seeing this as an emergency? I think it has to do with two illusions.

The Illusions

The first illusion is the Detection Illusion: teachers believe they can still easily detect AI use, and can therefore prevent it from being used in schoolwork. This illusion leads educators to rely on outdated assessment methods, confident they can spot AI-generated work when, in reality, the technology has far surpassed our ability to identify it consistently:

- No specialized AI detector can identify AI writing with high accuracy and without the risk of false positives, especially after multiple rounds of prompting. Even watermarks won’t help much.

- People can’t detect AI writing well. Editors at top linguistics journals couldn’t. Teachers couldn’t (though they thought they could: the Illusion again). While simple AI writing might be detectable (“delve,” anyone?), there are plenty of ways to disguise “AI writing” styles through simple prompting. In fact, readers judge well-prompted AI writing to be more human than human writing.

- You can’t ask an AI to detect AI writing (even though people keep trying). When asked whether something written by a human was written by an AI, GPT-4 gets it wrong 95% of the time.

There are still options that preserve old assignments. Teachers can return to in-class writing, ask students to demonstrate their skills in person, or use other techniques that might mitigate AI cheating through close monitoring. But for the vast majority of teachers, doing so requires adjustments that have yet to be made. To date, few have actually responded to the shattering of the Detection Illusion by shifting how they approach teaching and assessment.

While teachers grapple with the Detection Illusion, students face their own misconception: Illusory Knowledge. They don’t actually realize that getting help with homework is undermining their learning. After all, they are getting advice and answers from the AI that help them solve problems, which feels like fluency. As the authors of the study at Rutgers wrote: “There is no reason to believe that the students are aware that their homework strategy lowers their exam score... they make the commonsense inference that any study strategy that raises their homework quiz score raises their exam score as well.”

The same thing appears to be happening with AI, as a study by some of my colleagues at Penn discovered. They conducted an experiment at a high school in Turkey where some students were given access to GPT-4 to help with homework, either through the standard ChatGPT interface (no prompt engineering) or through ChatGPT with a tutor prompt. Student homework scores shot up, but using standard, unprompted ChatGPT for homework undermined learning by acting as a crutch. Even though students thought they learned a lot from using ChatGPT, they actually learned less, scoring 17% worse on their final exam.

Despite this, the survey I quoted earlier found that 59% of teachers see AI as positive for learning, and I don’t think they are wrong. While using AI as a crutch can hurt learning, more careful use of AI is different. We can see signs of this in the Turkey study, which found that giving students ChatGPT with a basic tutor prompt, instead of having them use ChatGPT on their own, boosted homework scores without lowering final exam grades. Similarly, a study in a massive programming class at Stanford found that using ChatGPT led to increased, not decreased, exam grades.

And, of course, students are not using AI just to do their homework. They are getting aid in understanding complex topics, brainstorming ideas, refreshing their knowledge, creating new forms of creative work, getting feedback, getting advice, and so much more. Focusing just on the question of homework, and the illusions it fosters, can discourage us from making progress.

Encouraging, not replacing, thinking

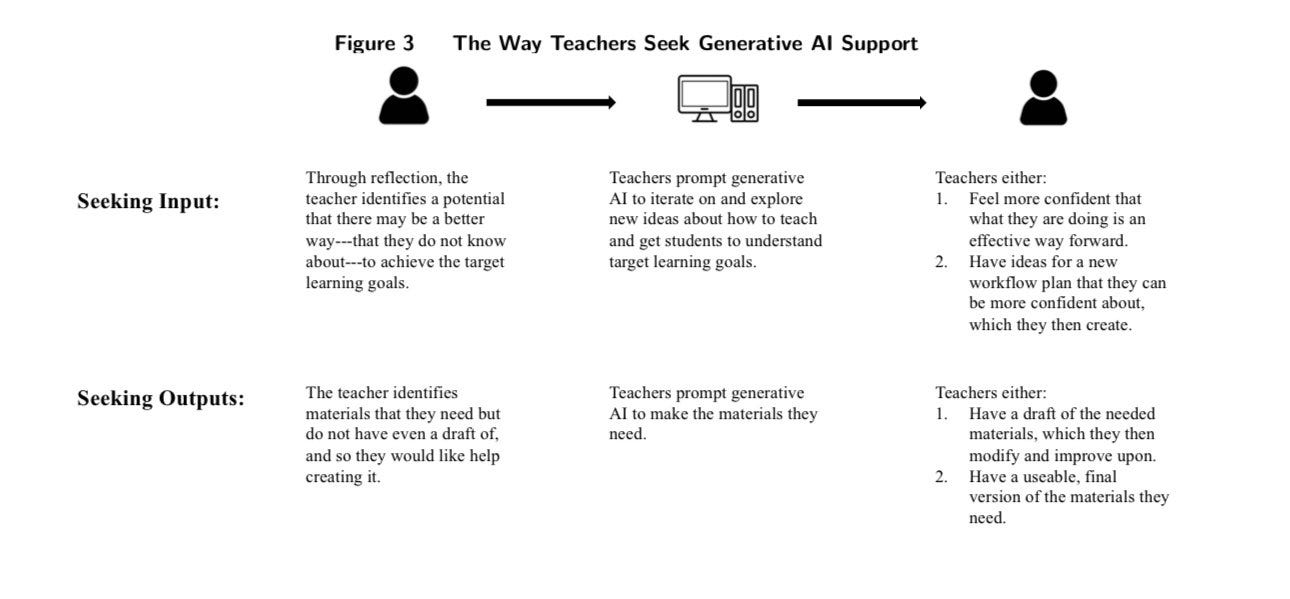

To do so, we need to center teachers in the process of using AI, rather than just leaving AI to students (or to those who dream of replacing teachers entirely). We know that almost three-quarters of teachers are already using AI for work, but we have only just started to learn the most effective ways for teachers to use it. A recent in-depth qualitative study found that teachers who used AI both for output (create a worksheet, develop a quiz) and for input (help me think through what makes a Great American novel, give me ways to explain positive and negative numbers) got more value than those who used AI for producing output alone. This points to a useful path forward for AI in education: using it as a co-intelligence, a tool for helping humans think better.

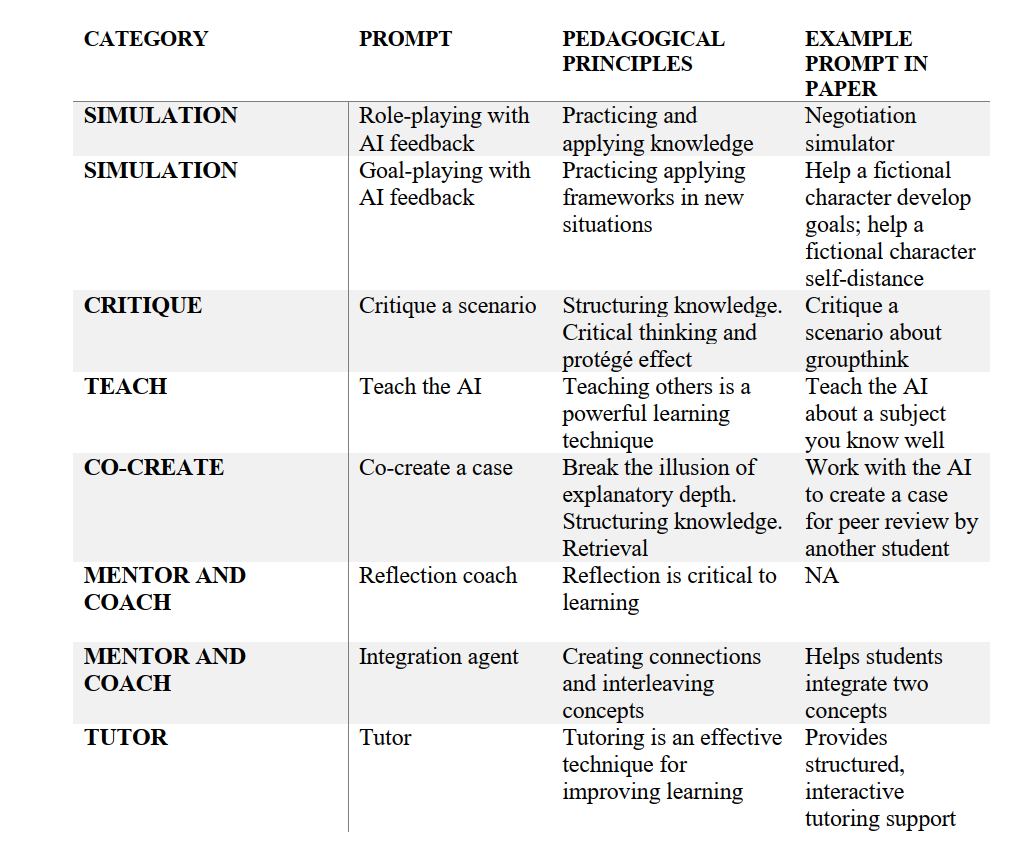

Increasingly, AI is being used in the same way with students, pushing them to think rather than letting them use AI as a crutch. For example, we have released multiple prompts, all under a free Creative Commons license, that instructors can customize or modify for their classrooms (here is a deep dive into one of them, a simulator prompt). These sorts of prompts are designed to expose Illusory Knowledge, forcing students to confront what they know and don’t know. Many other educators are designing similar exercises. In doing so, we can take advantage of what makes AI so promising for teaching: its ability to produce customized learning experiences that meet students where they are, and that are broadly accessible in ways that past forms of educational technology never were.

The integration of AI in education is not a future possibility; it’s our present reality. This shift demands more than passive acceptance or futile resistance. It requires a fundamental reimagining of how we teach, learn, and assess knowledge. As AI becomes an integral part of the educational landscape, our focus must evolve. The goal isn’t to outsmart AI or to pretend it doesn’t exist, but to harness its potential to enhance education while mitigating its downsides. The question now is not whether AI will change education, but how we will shape that change to create a more effective, equitable, and engaging learning environment for all.